Journal of Fuzzy Systems and Control, Vol. 3, No. 2, 2025

Design of Embedded Control System with Fuzzy Controller and Nonlinear Controller for the Line Follower Robot

Nguyen Xuan Chiem 1,* , Nguyen Cong Binh Nguyen 2

1,2 Department of Automation and Computing Techniques, Le Quy Don Technical University, Vietnam

Email: 1 chiemnx@mta.edu.vn, 2 nguyentutu@gmail.com

*Corresponding Author

Abstract—In this paper, an embedded system for motion control of a Line Follower Robot (LFR) is presented. The LFR changes direction by varying the relative rotational speed of its wheels and thus does not require an additional steering mechanism. A robot designed at the Control Systems Laboratory, Le Quy Don Technical University, is chosen as the research platform. A fuzzy logic controller is used to minimize the position and angle deviations. The rules of the fuzzy logic controller are built from the designer's experience, guided by a Lyapunov function. The outputs of this controller are the linear velocity and angular velocity, which serve as the reference values for the robot dynamics control loop. A nonlinear controller with bounded control signals is synthesized based on the synergetic control theory (STC). The combination of control laws keeps the system stable in the presence of measurement noise and uncertainties in the robot's model and parameters. In addition, the control system is implemented on an embedded platform. Simulation and experimental results under different scenarios demonstrate the effectiveness of the proposed control law.

Keywords—Line Follower Robot; Fuzzy Logic Controller; Lyapunov Function; Embedded System; Synergetic Control Theory

LFRs have been widely used in medicine, education, industry, and entertainment in recent years. In [1], [2], an LFR was designed to serve patients in hospitals. This robot delivers medicine to patients on request by following lines drawn on the floor. LFRs are widely used in education at all levels [3], and many competitions and games based on this robot have been developed. Another application of LFRs is the automatic transportation of equipment in industrial facilities [4]. Line detection is commonly implemented with infrared sensor modules [5]-[7]. These line following modules are used in most line tracking robots because they are simple to program and interface and have a low cost. Nowadays, with the development of computer vision technology, many robots use cameras to detect lines and calculate the output state values used as feedback signals in the control system [8], [9].

Various control methods and controllers are used on these robots to enhance tracking performance. The controller is designed so that the robot follows a predetermined path identified by color or boundary. The control system for an LFR is usually built as either a centralized or a hierarchical structure. Hierarchical control includes two controllers: a kinematic controller and a dynamic controller. In [10], [11], a PID controller is used to adjust the robot's motion, and the results show that the proportional gain has a great influence on the tracking stability of the robot. A centralized LQR controller based on a locally linearized model is presented in [12]. Other control laws have been proposed to improve line tracking quality when the mathematical model has nonlinear components. In [13], a backstepping control law is designed to overcome the uncertainty and load disturbance of the mathematical model. Based on the robot error model and the Lyapunov method, a variable structure controller designed to ensure stable tracking performance is presented in [14]. In [15], [16], a hierarchical control method is used with controllers designed using the Lyapunov method and the synergetic control theory. In [17], [18], a robust, chattering-free sliding mode controller (SMC) is designed and applied to a path-following robot. The results of these studies show that complex paths are tracked accurately and that the movement is fast and stable.

Many research papers have used soft computing algorithms for mobile robot control in academic as well as engineering fields. Fuzzy logic is used to design feasible solutions for local navigation, global navigation, path planning, steering control, and speed control of mobile robots [19], [20]. In [21]-[25], a method for tracking the path of a smart vehicle using two fuzzy controllers combined with vehicle direction control has been developed. In [21], a fuzzy logic controller and a regression method are presented for finding the optimal design parameters of a path-following robot with the shortest path completion time. In [22], a detailed design of a preference-based fuzzy behavioral system for robot vehicle navigation using a multi-valued logic framework is presented. A fuzzy PD control law with parameters optimized by nature-inspired optimization algorithms is presented in [23]. A neural network control method for overcoming measurement noise is presented in [26]. The studies above report good simulation results, but in embedded implementations the control signal is limited by saturation functions, which degrades control quality in some situations.

This study aims to overcome measurement noise and control signal limits on embedded systems. The kinematic control law is designed based on fuzzy logic and Lyapunov functions to overcome noise in image processing. The dynamic control law is designed based on the synergetic control theory (STC) to bound the control signal according to the physical limits of the real system. This paper is organized as follows: Section 2 presents the experimental model and mathematical model of the LFR. The synthesis of the kinematic and dynamic controllers based on fuzzy theory and the STC is presented in Section 3. Section 4 presents simulation results in MATLAB and experimental results. Conclusions and further research directions are described in Section 5.

In this paper, the LFR at the Control Systems Laboratory, Le Quy Don Technical University, is taken as the research object. Its 3D mechanical structure is shown in Fig. 1.

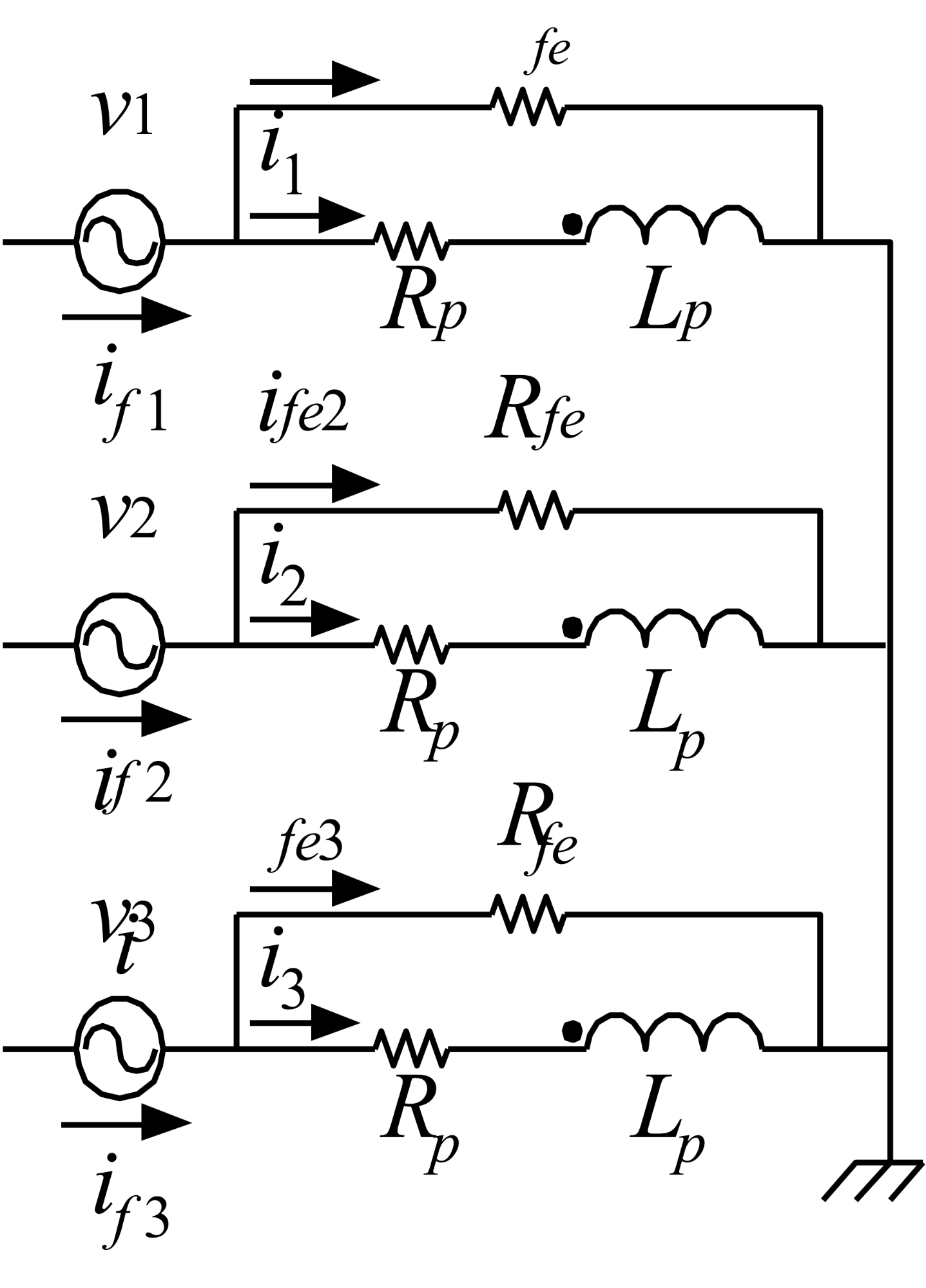

The LFR is a differential-drive two-wheeled robot: two DC motors control the speed and direction of the robot by changing the speeds of the two wheels. The speed of each wheel is measured by an incremental encoder mounted on the motor shaft with a resolution of 300 pulses. A Kinect camera is connected to a Jetson Nano embedded computer, which processes the captured images and calculates the deviation distance and deviation angle of the robot. The Arduino MEGA 2560 board executes the embedded control program and outputs signals to the DC driver to control the two motors. At the same time, it receives signals from the MPU 6050 sensor module and the encoders mounted on the robot. The robot's schematic is shown in Fig. 2.

Fig. 3 shows an approximate diagram of the real two-wheeled robot. It lists the important components and distances of the model, as well as the forces considered: one vertical force and one horizontal force at each drive wheel (the forces at the rolling wheels are considered negligible). The curve σ(q) represents the path the robot needs to follow.

Considering the robot as a rigid body, moving on a plane and without slipping, its dynamics are constructed by applying the Law of Conservation of Momentum and the Law of Conservation of Angular Momentum [7]. The velocity and acceleration have been calculated in a common reference frame fixed on the floor (x’, y’), but the vectors are expressed in terms of the x-y motion basis, which is fixed on the robot and has its own angular velocity. Both basic coordinate systems are shown in Fig. 3. The dynamics model presented in the studies [9], [27] is as follows:

| (1) |

where d is the distance from the sensor axis to the line (m) and θe is the deviation angle of the robot from the line. The remaining quantities in (1) are the moment of inertia of the robot about the vertical axis (kg.m²); Bf, the total coefficient of friction between the rotor and the wheel; the angular velocity of the robot; the voltages applied to the two motors; the radius of the wheel; the linear velocity of the robot, vx; the curvature of the line; and the mass of the robot.

To determine θe and d, a camera was used to detect the line. The technique for detecting the blue line is based on feature analysis, which aims to highlight the difference between an image that contains the line and an image without a line. The captured images are passed through color filters and analyzed to extract the characteristics of the line. The OpenCV-Python library on the Ubuntu operating system is used to implement this technique. After the line is found in the image, the deviation angle θe and the distance d of the robot from the line are calculated. The deviation angle θe is the angle between the main contour in the image and the vertical axis of the image. An OpenCV bounding-rectangle function returns information about the rectangle surrounding the contour, including the angle between the rectangle and the horizontal axis of the image; after some simple calculations, the deviation angle θe is obtained. The distance d is calculated by counting the number of pixels from the vertical axis in the middle of the image to the center of the rectangle surrounding the contour. This pixel count is converted to meters using an experimentally measured meters-per-pixel scale, which is valid because the distance and viewing angle of the camera are fixed. Fig. 4 illustrates how θe and d are calculated on the image and the result after calculation and image processing.
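The geometric step described above can be sketched in Python using only the standard library. This is an illustrative sketch, not the authors' program: instead of the OpenCV bounding rectangle, it estimates the line orientation from the second-order central moments of the line pixels, which gives the angle to the vertical image axis directly. The function name `line_deviation`, its parameters, and the sign conventions (θe positive when the line leans to the right going down the image, d positive when the line lies to the right of the image center) are assumptions.

```python
import math


def line_deviation(line_pixels, image_width, metres_per_pixel):
    """Estimate (d, theta_e) from the (x, y) pixel coordinates of the detected line.

    x grows to the right and y grows downward, as in image coordinates.
    metres_per_pixel is the experimentally measured scale (a hypothetical value here).
    """
    n = len(line_pixels)
    if n < 2:
        raise ValueError("need at least two line pixels")

    # Centroid of the line pixels.
    cx = sum(x for x, _ in line_pixels) / n
    cy = sum(y for _, y in line_pixels) / n

    # Second-order central moments of the pixel cloud.
    sxx = sum((x - cx) ** 2 for x, _ in line_pixels) / n
    syy = sum((y - cy) ** 2 for _, y in line_pixels) / n
    sxy = sum((x - cx) * (y - cy) for x, y in line_pixels) / n

    # Angle between the principal axis of the pixels and the vertical image axis (rad).
    theta_e = 0.5 * math.atan2(2.0 * sxy, syy - sxx)

    # Signed horizontal offset of the line centre from the vertical centre line (m).
    d = (cx - image_width / 2.0) * metres_per_pixel
    return d, theta_e
```

For a perfectly vertical line through the image center the function returns zero for both values; a line along the image diagonal gives θe = π/4.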

Fig. 4. d and θe after image processing

The linear velocity vx is determined by two incremental encoders attached to the right and left motor shafts. Two external interrupts are used to count the encoder pulses over a period of 100 ms. The robot's translational velocity is the average of the translational velocities of the two wheels. The program to calculate the velocity vx has the following form:
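As an illustration only (the embedded program itself runs on the Arduino board and is not reproduced here), the velocity calculation described above can be sketched in Python. The function names, the wheel radius used in the example, the reading of "300 pulses" as pulses per shaft revolution, and the absence of any gearbox ratio between shaft and wheel are all assumptions.

```python
import math

PULSES_PER_REV = 300      # encoder resolution from the text; "per revolution" is assumed
SAMPLE_PERIOD_S = 0.100   # pulse counting window of 100 ms from the text


def wheel_speed(pulse_count, wheel_radius_m,
                pulses_per_rev=PULSES_PER_REV, period_s=SAMPLE_PERIOD_S):
    """Translational speed (m/s) of one wheel from the pulses counted in one window.

    Assumes the encoder turns once per wheel revolution (no gear reduction).
    """
    revolutions = pulse_count / pulses_per_rev
    return 2.0 * math.pi * wheel_radius_m * revolutions / period_s


def robot_linear_velocity(left_pulses, right_pulses, wheel_radius_m):
    """Linear velocity vx (m/s) as the average of the two wheel speeds."""
    v_left = wheel_speed(left_pulses, wheel_radius_m)
    v_right = wheel_speed(right_pulses, wheel_radius_m)
    return 0.5 * (v_left + v_right)


if __name__ == "__main__":
    # Hypothetical wheel radius (0.03 m) and pulse counts for one 100 ms window.
    print(robot_linear_velocity(left_pulses=60, right_pulses=66, wheel_radius_m=0.03))
```

On the real board the two pulse counts would come from the interrupt service routines and be reset at the end of each window.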

The robot's angular velocity is determined through the MPU6050 sensor by measuring the angular velocity around the vertical z-axis. The program to calculate the angular velocity (GyroZ) has the following form:

where 3.90 is the calibration offset of the angular velocity at the sensor's zero point.
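A minimal Python sketch of such a conversion is given below for illustration; it is not the authors' Arduino listing. It assumes that the gyroscope is configured for the ±250 °/s range (131 LSB per °/s according to the MPU6050 datasheet), that the offset 3.90 is expressed in °/s, and that it is subtracted from the scaled reading. The function name and constants are hypothetical.

```python
GYRO_SENSITIVITY_LSB_PER_DPS = 131.0  # MPU6050 datasheet value for the +/-250 deg/s range
GYRO_Z_ZERO_OFFSET = 3.90             # zero-point calibration value quoted in the text


def gyro_z_rate(raw_gyro_z,
                sensitivity=GYRO_SENSITIVITY_LSB_PER_DPS,
                zero_offset=GYRO_Z_ZERO_OFFSET):
    """Convert a raw signed 16-bit z-axis reading to a calibrated rate in deg/s.

    The sign and units of the offset are assumptions, not taken from the source.
    """
    return raw_gyro_z / sensitivity - zero_offset
```

The offset itself would normally be found by averaging the scaled reading while the robot is at rest.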

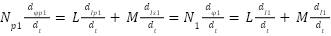

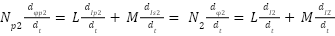

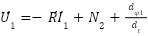

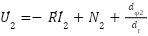

The robot controller consists of two components: the kinematic controller and the dynamic controller. First, the kinematic controller is synthesized based on the kinematic equations. From (1), we use the first two equations to synthesize the control law:

| (2) |

Select the candidate Lyapunov function as follows:

| (3) |

From the derivative of the Lyapunov function, we get:

| (4) |

To ensure Lyapunov stability while keeping the values within the given limits, we synthesize a fuzzy controller with two input variables, d and θe, and two output variables, vr and ωr. These two output values are the reference values for the dynamic control loop. The linguistic variables are chosen as follows: the distance deviation d (Big negative, Negative, Zero, Positive, Big positive) and the angle deviation θe (Big negative, Negative, Zero, Positive, Big positive), defined as in Fig. 5 and Fig. 6. Based on experience from this analysis, the control law of the controller is designed to include 25 IF-THEN rules, listed in Table I and Table II. The output of the fuzzy controller is computed according to the defuzzification principle of the Takagi-Sugeno fuzzy model.

Table I. Fuzzy rules for ωr

| d \ θe | BN | N | Z | P | BP |
|---|---|---|---|---|---|
| BN | 0.0732 | 0.0319 | -0.0094 | -0.0506 | -0.0919 |
| N | 0.0778 | 0.0366 | -0.0047 | -0.0459 | -0.0872 |
| Z | 0.0825 | 0.0413 | 0 | -0.0413 | -0.0825 |
| P | 0.0872 | 0.0459 | 0.0047 | -0.0366 | -0.0778 |
| BP | 0.0919 | 0.0506 | 0.0094 | -0.0319 | -0.0732 |

Table II. Fuzzy rules for vr

| d \ θe | BN | N | Z | P | BP |
|---|---|---|---|---|---|
| BN | 0.7149 | 0.3598 | 0.0047 | -0.3504 | -0.7056 |
| N | 0.3570 | 0.1791 | 0.0012 | -0.1768 | -0.3547 |
| Z | 0.0015 | 0.00075 | 0 | 0.00075 | -0.0015 |
| P | -0.3517 | -0.1753 | 0.0012 | 0.1776 | 0.3540 |
| BP | -0.7026 | -0.3489 | 0.0047 | 0.3583 | 0.7119 |
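The rule evaluation can be illustrated with a short Python sketch of zero-order Takagi-Sugeno inference using the ωr rule values listed above. The membership functions of Fig. 5 and Fig. 6 are not reproduced here, so the sketch assumes five evenly spaced triangular sets on a symmetric range, product firing strengths, and hypothetical input ranges `d_max` and `theta_max`. All function and variable names are illustrative.

```python
# Rule consequents for omega_r (rows: d = BN, N, Z, P, BP; columns: theta_e likewise).
OMEGA_RULES = [
    [0.0732, 0.0319, -0.0094, -0.0506, -0.0919],
    [0.0778, 0.0366, -0.0047, -0.0459, -0.0872],
    [0.0825, 0.0413, 0.0, -0.0413, -0.0825],
    [0.0872, 0.0459, 0.0047, -0.0366, -0.0778],
    [0.0919, 0.0506, 0.0094, -0.0319, -0.0732],
]


def memberships(x, x_max):
    """Membership degrees of x in five evenly spaced triangular sets on [-x_max, x_max].

    The triangular shape and even spacing are assumptions; x is clipped to the range.
    """
    x = max(-x_max, min(x_max, x))
    step = x_max / 2.0
    centres = [-x_max, -step, 0.0, step, x_max]
    return [max(0.0, 1.0 - abs(x - c) / step) for c in centres]


def sugeno_output(d, theta_e, rules, d_max, theta_max):
    """Zero-order Takagi-Sugeno output: weighted average of the rule consequents."""
    mu_d = memberships(d, d_max)
    mu_t = memberships(theta_e, theta_max)
    num = 0.0
    den = 0.0
    for i, w_d in enumerate(mu_d):
        for j, w_t in enumerate(mu_t):
            w = w_d * w_t            # firing strength of rule (i, j)
            num += w * rules[i][j]
            den += w
    return num / den                 # den > 0 because the inputs are clipped


if __name__ == "__main__":
    # Hypothetical input ranges: |d| <= 0.1 m, |theta_e| <= 0.5 rad.
    print(sugeno_output(0.02, -0.1, OMEGA_RULES, d_max=0.1, theta_max=0.5))
```

With these membership functions at most four rules fire for any input, so the output interpolates between neighbouring table entries; the vr output would be computed the same way from its own rule values.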

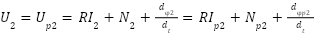

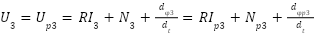

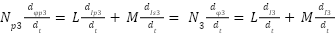

To synthesize the dynamic controller under input constraints, we use the last two equations of (1) and extend the phase space of the system to incorporate the additional input condition [16], as follows:

| (5) |

where U0 is the maximum value of the voltage signal that can be supplied to the two motors at the same time on the real system, and the two remaining parameters in (5) are positive constants.

According to the requirements of the LFR problem, the robot moves with a given translational velocity and a given angular velocity. We choose the technological invariants corresponding to the control objective as follows:

| (6) |

The control law synthesis manifolds are selected as follows:

| (7) |

According to the ADAR (Analytical Design of Aggregated Regulators) method, the macro variables ψv and ψω must satisfy the following system of basic functional equations:

| (8) |
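For orientation, the functional equations most commonly used in the ADAR method are first-order and linear in each macro variable. The block below writes this standard form for two macro variables together with its solution. The time constants T_v and T_ω, the symbol ψ_ω, and the assumption that (8) takes exactly this form are not taken from the source.

```latex
\begin{aligned}
T_v \dot{\psi}_v + \psi_v &= 0, & T_v &> 0,\\
T_\omega \dot{\psi}_\omega + \psi_\omega &= 0, & T_\omega &> 0,\\[4pt]
\psi_v(t) &= \psi_v(0)\, e^{-t/T_v}, &
\psi_\omega(t) &= \psi_\omega(0)\, e^{-t/T_\omega}.
\end{aligned}
```

Under this form, each macro variable decays exponentially to zero, so the closed-loop trajectories are attracted to the manifolds ψ = 0 at a rate set by the chosen time constants.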

From equations (7) and (8), we have:

| (9) |

Solving the system of equations (9), we obtain the two virtual control signals as follows:

| (10) |

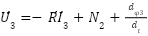

The real control signal supplied to the motor is calculated according to the formula:

| (11) |

The robot model is designed with hardware with model parameters determined relatively as follows:  . The simulation scenario is conducted with the condition that the robot will follow a path with a fixed small curvature

. The simulation scenario is conducted with the condition that the robot will follow a path with a fixed small curvature  , and the robot's translational velocity is stable at

, and the robot's translational velocity is stable at  . The initial values of the robot are as follows:

. The initial values of the robot are as follows:  . Parameters of the control law:

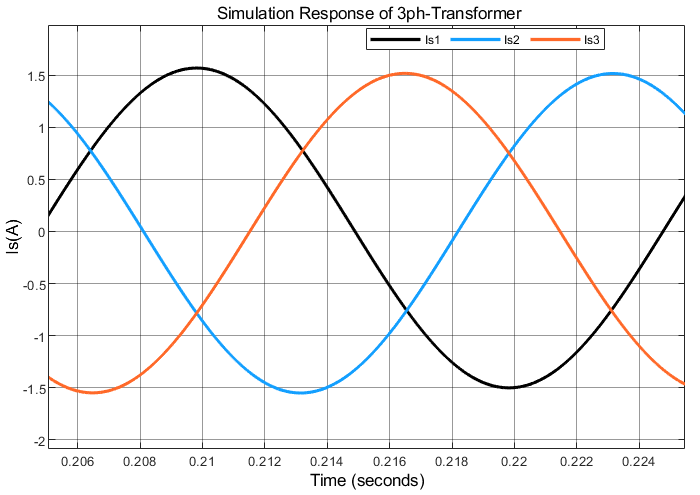

. Parameters of the control law:  . The simulation results performed with the proposed control law are shown in Fig. 7, Fig. 8, and Fig. 9. From the results, we can see that the proposed control law ensures that the robot follows the desired path. Fig. 7 shows the error in distance and angle approaching 0 after 7 (s). The translational velocity and angular velocity of the robot approaching the desired value after 4 (s) are shown in Fig. 8. Fig. 9 shows that the voltage value supplied to the motor is less than

. The simulation results performed with the proposed control law are shown in Fig. 7, Fig. 8, and Fig. 9. From the results, we can see that the proposed control law ensures that the robot follows the desired path. Fig. 7 shows the error in distance and angle approaching 0 after 7 (s). The translational velocity and angular velocity of the robot approaching the desired value after 4 (s) are shown in Fig. 8. Fig. 9 shows that the voltage value supplied to the motor is less than  .

.

Experiments on the LFR were carried out to study the effectiveness of the proposed control algorithm. The trajectory tracking experiment for the LFR presented in Section 2 was implemented on the Arduino embedded board. In the experimental scenario, the robot follows a curved line. The experimental results are shown in Fig. 10, Fig. 11, and Fig. 12. The results in Fig. 10 show that the LFR follows the line with acceptable error. At the bends, the robot reaches its largest distance error of 0.05 m at 6.6 s, and the angle error θe changes rapidly due to the bend of the line. The linear velocity of the robot reaches the set value of 0.4 m/s after more than 6 s, and the angular velocity changes rapidly when entering a bend (Fig. 11). The visual test results of the tracking performance of the two controllers are shown in Fig. 12, in which the blue line is the line to be tracked and the red line is the trajectory of the robot's center of gravity. The figures show that the robot tracks the line well, although some deviation remains.

The purpose of this study is to design an embedded controller for line-following robots. The experimental LFR model was designed in the laboratory and uses computer vision to detect lines. The control law for the robot consists of two controllers in series. The kinematic control law is built based on fuzzy theory through Lyapunov functions. The dynamic control law with bounded inputs is designed based on the ADAR method and the extension of the dynamic equations. The proposed control law makes the robot robust to input disturbances and runs efficiently on embedded systems. Simulation and experimental results have verified the effectiveness of the proposed control law. In addition, the choice of control law parameters greatly affects the control quality. In future studies, the authors will present controllers that account for noise and uncertainty in the system to overcome these disadvantages.
